Reality capture is no longer a one-time activity.

In large industrial environments, scan data is collected continuously: during shutdowns, inspections and upgrades, and after plant changes. Over time, this creates a rich but complex dataset that reflects how the asset evolves.

At first glance, this seems like an advantage.

More data should mean better decisions.

But in practice, this is where scan data management becomes a real challenge.

When more data creates more uncertainty

As scan data accumulates, organisations run into a less obvious set of problems:

- the same area exists in multiple versions

- datasets come from different time periods

- updates are partial and distributed across projects

And at some point, a critical question emerges:

Which version of reality is the correct one for this task?

This becomes critical when working with external contractors — especially in plant-change or retrofit projects.

Because in these scenarios, access to data is not enough.

Context is what makes data usable.

The operational impact of unclear scan data

When teams are unsure which dataset to use, they compensate in predictable ways:

- requesting more data than necessary

- manually verifying information

- working with assumptions instead of confirmed context

This leads to:

- slower project execution

- duplicated effort

- unnecessary data transfers

- increased risk of working on outdated information

In large organisations, this often becomes a hidden issue within broader scan data workflows.

Why traditional scan data management doesn’t scale

Most companies still rely on traditional approaches to scan data management, such as:

- exporting point clouds or meshes

- preparing data packages

- sharing via FTP, cloud storage or internal servers

While this works for small projects, it becomes inefficient at scale:

- every request requires manual preparation

- the same data is filtered multiple times

- there is limited visibility into what was shared and when

Over time, scan data management becomes harder to control — not easier.

A different approach: define the data scope

Instead of thinking in terms of files, leading organisations are starting to think in terms of data scope.

A data scope defines:

- where (specific area of the asset)

- when (specific scan sessions or time range)

- who (which users or teams have access)

This simple shift changes the way reality capture data is managed.

Instead of sharing everything “just in case”, teams share only what is relevant for a specific task.
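To make the idea concrete, here is a minimal Python sketch of a data scope as a small data structure. It is an illustration only, not how any particular platform implements it: the class names, field names, area labels and user IDs are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ScanSession:
    session_id: str
    area: str            # hypothetical area label, e.g. "unit-2/pump-house"
    captured_on: date


@dataclass(frozen=True)
class DataScope:
    areas: frozenset[str]    # where: which parts of the asset
    start: date              # when: first day of the time range (inclusive)
    end: date                # when: last day of the time range (inclusive)
    users: frozenset[str]    # who: which users have access

    def sessions_for(self, user: str, sessions: list[ScanSession]) -> list[ScanSession]:
        """Return the sessions this user may see under the scope."""
        if user not in self.users:
            return []
        return [
            s for s in sessions
            if s.area in self.areas and self.start <= s.captured_on <= self.end
        ]


# Hypothetical sessions, invented for illustration.
sessions = [
    ScanSession("s1", "unit-2/pump-house", date(2021, 5, 10)),
    ScanSession("s2", "unit-2/pump-house", date(2024, 3, 2)),
    ScanSession("s3", "unit-5/boiler", date(2024, 3, 4)),
]

scope = DataScope(
    areas=frozenset({"unit-2/pump-house"}),
    start=date(2024, 1, 1),
    end=date(2024, 12, 31),
    users=frozenset({"contractor-a"}),
)

visible = scope.sessions_for("contractor-a", sessions)
print([s.session_id for s in visible])  # ['s2']
```

The contractor sees only the 2024 session for the pump house. The older scan of the same area and the scan of the unrelated boiler are left out, and a user outside the scope sees nothing.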

Why time-based filtering is critical in scan data workflows

Spatial selection is already standard in most tools.

But time is often missing from traditional scan data management processes.

In reality, industrial assets change constantly.

Without time context, even accurate scan data can become misleading.

Adding time as a filtering layer allows teams to:

- ensure data is up to date

- match datasets to project phases

- avoid costly design decisions based on outdated scans

For large-scale operations, this is not a feature — it’s a necessity.
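As a rough illustration of what time context buys, the Python sketch below flags scans that were captured before the latest recorded change in their area. It assumes the organisation keeps some record of when each area last changed. The change log, area labels and session IDs are hypothetical.

```python
from datetime import date

# Hypothetical record of the last known plant change per area.
last_change = {
    "unit-2/pump-house": date(2023, 9, 15),
    "unit-5/boiler": date(2020, 1, 20),
}

# Hypothetical scan sessions: (session_id, area, captured_on).
scans = [
    ("s1", "unit-2/pump-house", date(2021, 5, 10)),
    ("s2", "unit-2/pump-house", date(2024, 3, 2)),
    ("s3", "unit-5/boiler", date(2022, 6, 1)),
]


def outdated_scans(scans, last_change):
    """Return IDs of scans captured before the latest recorded change in their area."""
    stale = []
    for scan_id, area, captured_on in scans:
        changed_on = last_change.get(area)
        # Areas with no recorded change are not flagged.
        if changed_on is not None and captured_on < changed_on:
            stale.append(scan_id)
    return stale


print(outdated_scans(scans, last_change))  # ['s1']
```

Scan `s1` is geometrically accurate for 2021, but the pump house changed in 2023, so using it for design would be misleading.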

Use case: plant-change projects and external contractors

A common scenario in large organisations:

A contractor is hired to design a modification in a specific area of the plant.

The asset owner has:

- multiple scan campaigns of that area

- data collected over several years

- partial updates from different vendors

The contractor needs:

- only a specific part of the plant

- only the latest (or relevant) scan data

- clear and reliable input for design

Without structured scan data management, this leads to:

- oversized data packages

- confusion about which dataset to use

- additional back-and-forth communication

With a data-scope-based approach:

- only the required area is shared

- only relevant scan sessions are included

- the contractor works on clearly defined, decision-ready data

This cuts back-and-forth communication, keeps data packages small and removes doubt about which dataset to use.
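The scenario above can be sketched in a few lines of Python. The example assumes scan campaigns are tagged with a zone label and a capture date, and it picks the most recent session for each zone inside the area the contractor needs. The zone names, vendors and session IDs are hypothetical, and a real selection might use other criteria than "latest".

```python
from datetime import date

# Hypothetical campaign records: (session_id, vendor, zone, captured_on).
campaigns = [
    ("c1", "vendor-a", "pump-house/north", date(2019, 4, 1)),
    ("c2", "vendor-a", "pump-house/south", date(2019, 4, 2)),
    ("c3", "vendor-b", "pump-house/north", date(2023, 11, 20)),  # partial update
    ("c4", "vendor-a", "boiler/east", date(2024, 2, 5)),
]


def latest_per_zone(campaigns, required_prefix):
    """Pick the most recent session for each zone under the required area."""
    latest = {}
    for session_id, _vendor, zone, captured_on in campaigns:
        if not zone.startswith(required_prefix):
            continue  # outside the area the contractor needs
        current = latest.get(zone)
        if current is None or captured_on > current[1]:
            latest[zone] = (session_id, captured_on)
    return {zone: sid for zone, (sid, _) in latest.items()}


package = latest_per_zone(campaigns, "pump-house/")
print(package)  # {'pump-house/north': 'c3', 'pump-house/south': 'c2'}
```

The partial update from the second vendor replaces the older scan of the north zone, the south zone keeps its only available session, and the boiler data is never included.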

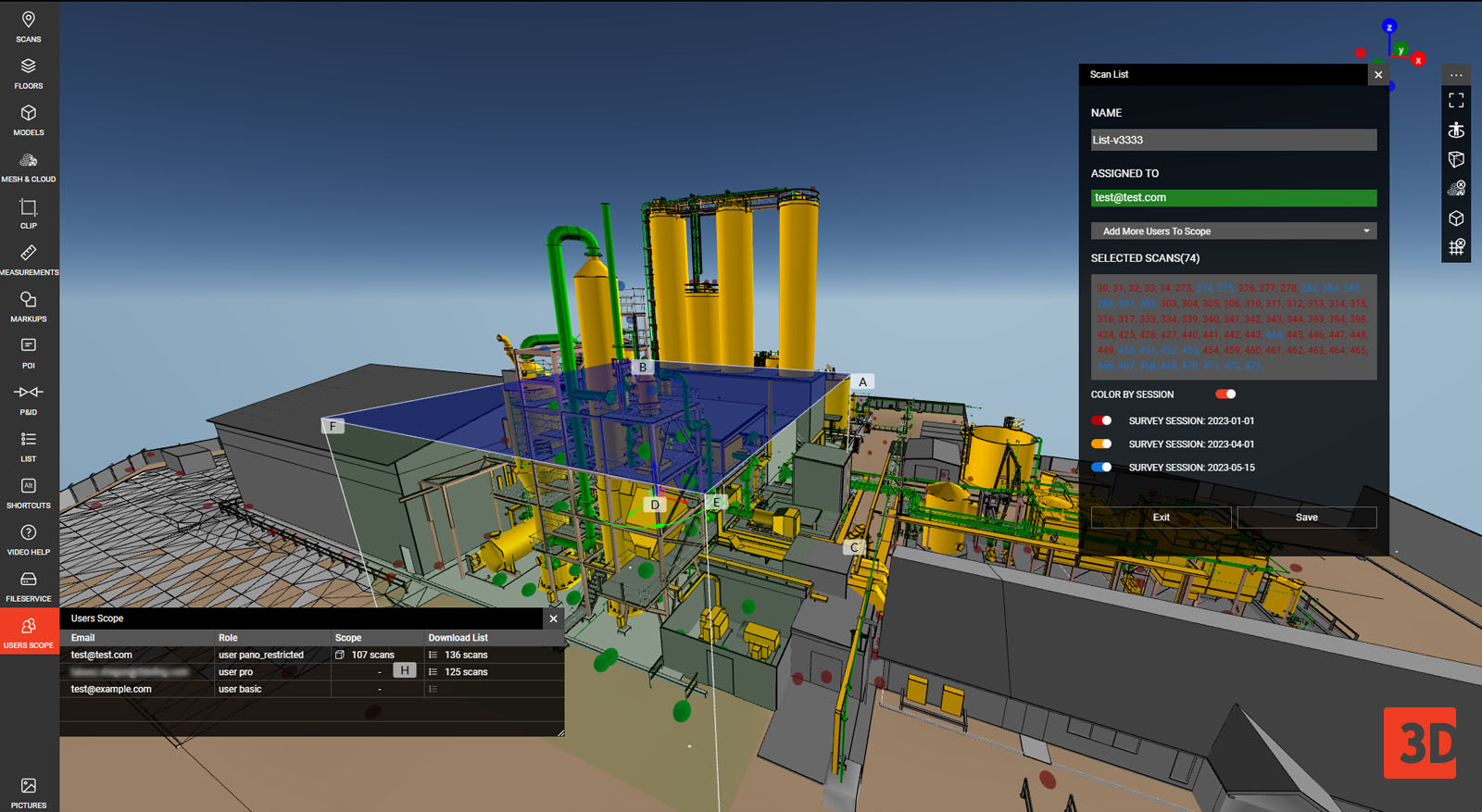

How WebPano supports modern scan data management

Selective Data Sharing

Platforms like WebPano enable a more scalable approach to scan data by allowing teams to define and manage data scopes directly in a browser-based environment.

Instead of exporting and sending files, users can:

- select specific areas of the asset

- filter scan data by time (sessions)

- assign access to selected stakeholders

- review the dataset before sharing

This improves not only data sharing but the entire engineering data collaboration workflow.

See how Selective Data Sharing works in practice

A more sustainable way to manage reality capture data

As reality capture becomes continuous, the challenge is no longer how to collect data.

It’s how to:

- provide the right data

- to the right people

- at the right time

For organisations operating at scale, improving scan data management and sharing workflows can lead to:

- better collaboration with contractors

- reduced project delays

- greater confidence in engineering decisions

Because ultimately, data only creates value when it is clear, relevant and trusted.

Want to improve scan data management in your organisation?

If you are dealing with:

- multiple scan datasets across time

- complex contractor workflows

- challenges in controlling data access

it may be worth exploring how a modern approach to scan data management can support your operations.

Book a demo or get in touch to see how WebPano helps large organisations manage and share reality data at scale.